Causal Inference using the Potential Outcomes Framework

The power of asking "what if": analyzing alternative scenarios.

"In God we trust. All others must bring data."

—W. Edwards Deming (1900-1993), American economist and statistician

Economics fundamentally concerns itself with cause and effect. When policymakers ask whether minimum wage increases reduce employment, or whether college attendance boosts lifetime earnings, they pose causal questions that demand rigorous answers. Yet establishing causation proves far more treacherous than intuition suggests. The Potential Outcomes Framework, also known as the Rubin Causal Model (RCM), originated in Jerzy Spława-Neyman's 1923 work on randomized experiments and was extended to observational studies by Donald Rubin in the 1970s. It provides the mathematical foundation for navigating this challenge.

The framework's core insight transforms woolly discussions about "what causes what" into precise mathematical statements about counterfactual comparisons. It forces researchers to acknowledge what they can never observe: the alternative reality where circumstances differ. This isn't merely academic precision — it's the difference between policies grounded in rigorous evidence and those built on wishful thinking. When politicians claim their favored interventions "work," the Potential Outcomes Framework provides the analytical machinery to separate genuine effects from spurious correlations.

This article examines how this framework revolutionized causal thinking in economics. First, we explore the fundamental problem that makes causal inference so challenging. Second, we formalize the mathematical structure that clarifies what we can and cannot identify from data. Third, we demonstrate how randomized experiments solve core identification problems. Finally, we show how the framework guides analysis when perfect randomization proves impossible, offering a rigorous foundation for policy evaluation even under real-world constraints.

The Fundamental Problem of Causal Inference

Consider a seemingly straightforward question: Does attending Harvard University increase lifetime earnings? Observing that Harvard graduates earn more than community college graduates tells us little about causation. Perhaps those admitted to Harvard possess unmeasured talents that would generate high earnings regardless of educational choice. The challenge lies in constructing a meaningful comparison between what happened and what would have happened under different circumstances.

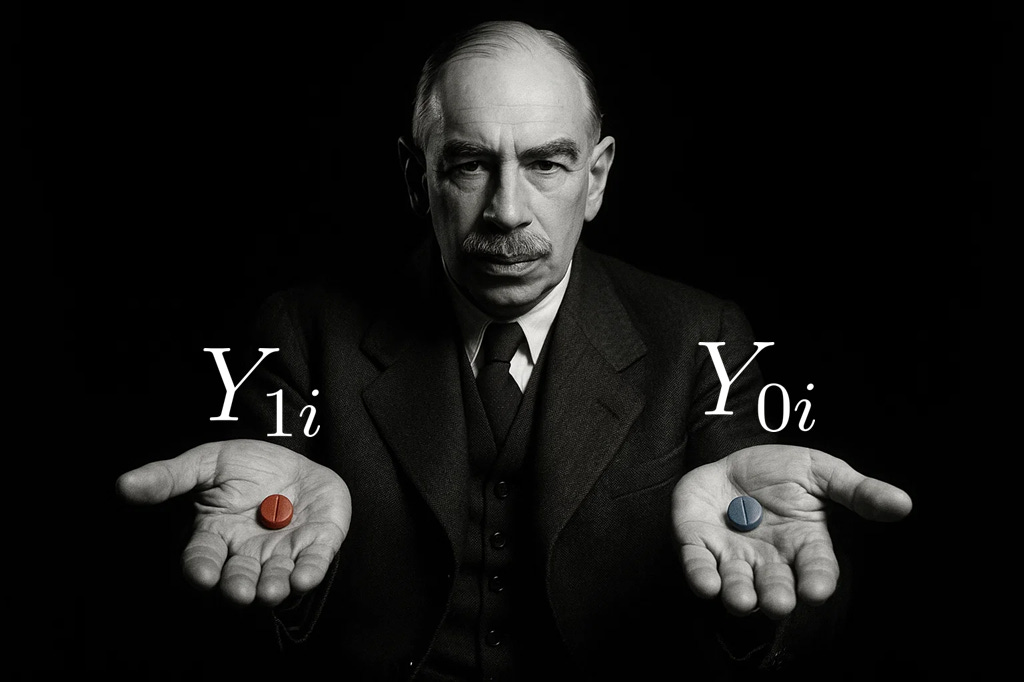

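The Potential Outcomes Framework formalizes this challenge by recognizing that every individual has multiple potential outcomes, though we observe only one. For person $i$, we define:

$Y_{1i}$: the lifetime earnings of person $i$ if they attend Harvard (the treated state)

$Y_{0i}$: the lifetime earnings of person $i$ if they attend community college (the untreated state)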

The causal effect for individual $i$ equals the difference between these potential outcomes:

$$Y_{1i} - Y_{0i}$$

This formulation appears deceptively simple, yet it immediately reveals the core difficulty: we can never observe both potential outcomes for the same person. If someone attends Harvard, we observe $Y_{1i}$ but never witness $Y_{0i}$. If they choose community college, we observe $Y_{0i}$ but remain forever ignorant of $Y_{1i}$. The statistician Paul Holland termed this the "fundamental problem of causal inference": the impossibility of observing the same unit in both the treated and the untreated state.

This problem transcends mere data limitations. Even with unlimited resources and perfect measurement, the counterfactual outcome remains inherently unobservable. We cannot rewind time and replay someone's life under alternative circumstances. The missing counterfactual represents not a temporary empirical constraint but a permanent feature of reality that any serious causal analysis must confront.
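The missing-counterfactual problem can be made concrete with a small simulation. The sketch below is illustrative only: the earnings distributions, the 50/50 treatment rule, and the helper names `simulate_person` and `observe` are assumptions introduced here, not part of the original analysis. Inside a simulation both potential outcomes exist, but the "researcher's view" returned by `observe` contains only one of them.

```python
import random


def simulate_person(rng):
    """Draw both potential outcomes and a treatment status for one person."""
    y0 = rng.gauss(50, 10)        # hypothetical earnings if untreated (assumed scale)
    y1 = y0 + rng.gauss(5, 2)     # hypothetical earnings if treated
    d = 1 if rng.random() < 0.5 else 0
    return {"y0": y0, "y1": y1, "d": d}


def observe(person):
    """Return only what a researcher sees: D_i and the realized outcome Y_i."""
    d = person["d"]
    y = person["y1"] if d == 1 else person["y0"]
    return {"d": d, "y": y}


rng = random.Random(0)
people = [simulate_person(rng) for _ in range(5)]
observed = [observe(p) for p in people]

for p, o in zip(people, observed):
    missing = "Y0" if o["d"] == 1 else "Y1"
    effect = p["y1"] - p["y0"]    # computable only because we simulated both outcomes
    print(f"D={o['d']}  Y={o['y']:6.1f}  missing={missing}  true effect={effect:4.1f}")
```

The individual effect in the last column is available only because the data are simulated; with real data, the `missing` column is exactly what can never be filled in.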

From Individual to Average Effects

Since individual treatment effects remain unidentifiable, researchers typically focus on average effects across populations. The most important of these aggregates include:

Average Treatment Effect (ATE)

The expected difference in outcomes if everyone received treatment versus if no one received treatment:

$$\text{ATE} = E[Y_{1i} - Y_{0i}]$$

Average Treatment Effect on the Treated (TOT)

The average effect among those who actually received treatment:

$$\text{TOT} = E[Y_{1i} - Y_{0i} \mid D_i = 1]$$

where $D_i$ indicates treatment status (1 if treated, 0 if not).

These distinctions carry profound policy implications. If Harvard admission slots are scarce, policymakers might prioritize the TOT — the effect on those who actually attend — over the hypothetical ATE that includes individuals who would never enroll regardless. The choice between these parameters depends crucially on the policy question at hand and the constraints facing decision-makers.
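To see how the two parameters can diverge, consider a minimal simulation in which the treatment effect grows with an unobserved "ability" term and higher-ability people are more likely to select into treatment. The data-generating process, its coefficients, and the function name `simulate` are hypothetical choices made for illustration.

```python
import random
from statistics import fmean


def simulate(n, seed):
    """Return (y0, y1, d) tuples from a hypothetical earnings model."""
    rng = random.Random(seed)
    units = []
    for _ in range(n):
        ability = rng.gauss(0, 1)                  # unobserved by the researcher
        y0 = 50 + 10 * ability + rng.gauss(0, 5)   # untreated outcome
        y1 = y0 + 5 + 3 * ability                  # effect is larger for high ability
        d = 1 if ability + rng.gauss(0, 1) > 0.5 else 0   # self-selection into treatment
        units.append((y0, y1, d))
    return units


units = simulate(20_000, seed=1)

# ATE averages the individual effect over everyone;
# TOT averages it only over those with D_i = 1.
ate = fmean(y1 - y0 for y0, y1, d in units)
tot = fmean(y1 - y0 for y0, y1, d in units if d == 1)
print(f"ATE = {ate:.2f}, TOT = {tot:.2f}")
```

Under these assumptions the TOT exceeds the ATE, because the people who select into treatment are the ones who gain the most from it. With a constant treatment effect the two would coincide.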

The Selection Problem

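The challenge becomes acute when we attempt empirical estimation. Suppose we naively compare average earnings between Harvard and community college graduates:

$$E[Y_i \mid D_i = 1] - E[Y_i \mid D_i = 0]$$

Here $Y_i = D_i Y_{1i} + (1 - D_i) Y_{0i}$ denotes the observed outcome. This "observed difference" can be mathematically decomposed:

$$E[Y_i \mid D_i = 1] - E[Y_i \mid D_i = 0] = E[Y_{1i} - Y_{0i} \mid D_i = 1] + \left( E[Y_{0i} \mid D_i = 1] - E[Y_{0i} \mid D_i = 0] \right)$$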

The first term,

$$E[Y_{1i} - Y_{0i} \mid D_i = 1],$$

represents the genuine treatment effect on the treated.

The second term,

$$E[Y_{0i} \mid D_i = 1] - E[Y_{0i} \mid D_i = 0],$$

captures selection bias: the difference between what Harvard attendees would have earned at community college and what community college attendees actually earned. If Harvard systematically admits students who would excel regardless of institutional choice, this selection bias will be substantial and positive, leading to dramatic overestimates of Harvard's causal impact.
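The decomposition rests on a single add-and-subtract step. As a sketch, write the observed outcome as $Y_i = D_i Y_{1i} + (1 - D_i) Y_{0i}$ (this switching notation is added here for illustration), so that conditional means of $Y_i$ reduce to conditional means of potential outcomes; then add and subtract $E[Y_{0i} \mid D_i = 1]$:

$$
\begin{aligned}
E[Y_i \mid D_i = 1] - E[Y_i \mid D_i = 0]
&= E[Y_{1i} \mid D_i = 1] - E[Y_{0i} \mid D_i = 0] \\
&= E[Y_{1i} \mid D_i = 1] - E[Y_{0i} \mid D_i = 1] + E[Y_{0i} \mid D_i = 1] - E[Y_{0i} \mid D_i = 0] \\
&= \underbrace{E[Y_{1i} - Y_{0i} \mid D_i = 1]}_{\text{TOT}} + \underbrace{E[Y_{0i} \mid D_i = 1] - E[Y_{0i} \mid D_i = 0]}_{\text{selection bias}}
\end{aligned}
$$

The term $E[Y_{0i} \mid D_i = 1]$ is the unobserved counterfactual: what the treated would have earned without treatment.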

Selection bias pervades observational studies across economics. High-earning individuals choose different neighborhoods, consume different products, and pursue different careers partly because their underlying characteristics — many unobservable to researchers — predispose them toward both high earnings and particular choices. Simply comparing outcomes across groups conflates genuine causal effects with these pre-existing differences, rendering most naive comparisons misleading.
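The decomposition of the naive comparison into a treatment effect on the treated plus selection bias can be checked numerically. The following sketch uses a hypothetical data-generating process (the `simulate` function and all of its coefficients are assumptions) in which unobserved ability raises both earnings and the chance of being treated.

```python
import random
from statistics import fmean


def simulate(n, seed):
    """Return (y0, y1, d) tuples; ability drives both outcomes and selection."""
    rng = random.Random(seed)
    units = []
    for _ in range(n):
        ability = rng.gauss(0, 1)
        y0 = 50 + 10 * ability + rng.gauss(0, 5)
        y1 = y0 + 5 + 3 * ability
        d = 1 if ability + rng.gauss(0, 1) > 0.5 else 0
        units.append((y0, y1, d))
    return units


units = simulate(20_000, seed=2)
treated = [(y0, y1) for y0, y1, d in units if d == 1]
control = [(y0, y1) for y0, y1, d in units if d == 0]

# What a researcher can compute: Y1 is observed for the treated, Y0 for controls.
naive = fmean(y1 for _, y1 in treated) - fmean(y0 for y0, _ in control)

# What only the simulation reveals: both pieces of the decomposition.
tot = fmean(y1 - y0 for y0, y1 in treated)
selection_bias = fmean(y0 for y0, _ in treated) - fmean(y0 for y0, _ in control)

print(f"naive difference = {naive:.2f}")
print(f"TOT              = {tot:.2f}")
print(f"selection bias   = {selection_bias:.2f}")
```

In this setup the naive difference equals the sum of the other two quantities (up to floating-point rounding), and the selection bias is positive because the treated group would have earned more even without treatment.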

The Randomized Solution

Randomization provides an elegant solution by making treatment assignment independent of all potential outcomes. When we randomly assign treatment $D_i$, we guarantee:

$$(Y_{0i}, Y_{1i}) \perp D_i$$

meaning that both potential earnings are independent of treatment status.

This independence condition eliminates selection bias entirely. Random assignment creates treated and control groups that are identical in expectation across all characteristics, both observed and unobserved. The selection bias term vanishes:

$$E[Y_{0i} \mid D_i = 1] - E[Y_{0i} \mid D_i = 0] = 0$$

allowing the observed difference in outcomes to equal the true average treatment effect:

$$E[Y_i \mid D_i = 1] - E[Y_i \mid D_i = 0] = E[Y_{1i} - Y_{0i}]$$

This result explains why randomized controlled trials occupy the pinnacle of the evidence hierarchy. When implemented properly, randomization transforms an inherently unidentified causal question into a straightforward comparison of means. The resulting estimate possesses a clear causal interpretation without requiring additional assumptions about unobserved confounders or selection processes.
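A short simulation illustrates the contrast between self-selected and randomized treatment. This is a sketch under assumed parameters: the same hypothetical population is generated twice with the same seed, once with treatment driven by unobserved ability and once with a coin flip. The names `simulate` and `summarize` are introduced here for illustration.

```python
import random
from statistics import fmean


def simulate(n, seed, randomized):
    """Return (y0, y1, d) tuples under self-selection or random assignment."""
    rng = random.Random(seed)
    units = []
    for _ in range(n):
        ability = rng.gauss(0, 1)
        y0 = 50 + 10 * ability + rng.gauss(0, 5)
        y1 = y0 + 5 + 3 * ability
        if randomized:
            d = 1 if rng.random() < 0.5 else 0                 # coin flip
        else:
            d = 1 if ability + rng.gauss(0, 1) > 0.5 else 0    # self-selection
        units.append((y0, y1, d))
    return units


def summarize(units):
    """Return (naive difference in means, true ATE, selection bias)."""
    treated = [(y0, y1) for y0, y1, d in units if d == 1]
    control = [(y0, y1) for y0, y1, d in units if d == 0]
    naive = fmean(y1 for _, y1 in treated) - fmean(y0 for y0, _ in control)
    ate = fmean(y1 - y0 for y0, y1, _ in units)
    bias = fmean(y0 for y0, _ in treated) - fmean(y0 for y0, _ in control)
    return naive, ate, bias


naive_rct, ate_rct, bias_rct = summarize(simulate(50_000, seed=3, randomized=True))
naive_obs, ate_obs, bias_obs = summarize(simulate(50_000, seed=3, randomized=False))

print(f"randomized:    naive={naive_rct:.2f}  ATE={ate_rct:.2f}  bias={bias_rct:.2f}")
print(f"self-selected: naive={naive_obs:.2f}  ATE={ate_obs:.2f}  bias={bias_obs:.2f}")
```

Under random assignment the selection bias is close to zero and the simple difference in means lands near the true ATE, up to sampling noise. Under self-selection the same comparison overstates the effect substantially.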

Real-World Applications

The RCM has been applied extensively across disciplines to estimate causal effects from both experimental and observational data. Below are examples from the research literature that explicitly use this framework:

Natural Experiments in Health Policy

Zaslavsky (2021) discusses the application of the RCM in health policy research, particularly in the context of natural experiments. The study emphasizes the importance of unconfounded treatment assignment and the challenges that arise because researchers do not control treatment assignment in natural experiments. By leveraging the model, researchers can better infer causal relationships in such settings.

Causal Inference in Time Series Analysis

Menchetti et al. (2021) propose the C-ARIMA approach to estimate causal effects in time series settings under the RCM. The study formalizes the assumptions necessary for defining and estimating causal effects, demonstrating the method's applicability through simulations and an empirical application assessing the impact of a price reduction on supermarket sales.

Neighborhood Effects

Ludwig et al. (2012) draw on Rubin's foundational work in their analysis of the Moving to Opportunity experiment. Because housing vouchers were assigned at random, the study can isolate the causal impact of neighborhood environments within the potential outcomes framework.

These examples illustrate the flexibility and rigor of the Potential Outcomes Framework in addressing causal questions across diverse empirical contexts. By explicitly defining counterfactuals and grounding inference in clearly stated assumptions, the RCM provides a unifying structure for causal analysis.

When Randomization Fails: Non-Compliance

Even in randomized experiments, complications arise when treatment assignment differs from treatment receipt. For example, in the Moving to Opportunity study, not all families offered a housing voucher actually used it to relocate. This phenomenon, known as non-compliance, creates a disconnect between the intended treatment (Zi, the offer) and the actual treatment received (Di, moving to a low-poverty neighborhood).

The Potential Outcomes Framework accommodates this by distinguishing between different behavioral types:

Always-takers

Move regardless of whether they are offered a voucher.

Never-takers

Do not move regardless of whether they are offered a voucher.

Compliers

Move if and only if they are offered a voucher.

Defiers

Do the opposite of their assignment.

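Under the assumption that defiers do not exist (monotonicity), and given that the offer is randomly assigned and affects outcomes only through treatment receipt (the exclusion restriction), we can identify the Local Average Treatment Effect (LATE), the average causal effect of treatment for the compliers:

$$\text{LATE} = E[Y_{1i} - Y_{0i} \mid D_{1i} > D_{0i}]$$

where $D_{1i}$ and $D_{0i}$ denote the treatment person $i$ would receive with and without the offer, so that $D_{1i} > D_{0i}$ picks out the compliers.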

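Since compliers cannot be observed directly, we estimate LATE using the observed differences in outcomes and treatment uptake between the randomized groups:

$$\text{LATE} = \frac{E[Y_i \mid Z_i = 1] - E[Y_i \mid Z_i = 0]}{E[D_i \mid Z_i = 1] - E[D_i \mid Z_i = 0]}$$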

Here, the numerator captures the intent-to-treat effect (ITT) — how much the offer of treatment affects outcomes. The denominator captures the first-stage effect — how much the offer changes actual behavior (i.e., treatment take-up). Their ratio recovers the causal effect for compliers, the subgroup whose behavior is influenced by the instrument.

This quantity is often directly relevant for policy. If policymakers are concerned not just with making offers, but with changing behavior, then the LATE is the appropriate parameter to estimate. It tells us: What is the average effect of the treatment among those who actually respond to the intervention?
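The ratio of the intent-to-treat effect to the first stage can be illustrated with a simulated experiment that has imperfect compliance. Everything specific in this sketch is an assumption made for illustration: the population shares, the type-specific earnings and effects in `TYPES`, and the helper names `take_up` and `simulate`. Defiers are excluded by construction, and the offer affects outcomes only through take-up.

```python
import random
from statistics import fmean

# Hypothetical shares and parameters: name -> (share, mean of Y0, mean effect)
TYPES = {
    "always_taker": (0.2, 54.0, 8.0),
    "never_taker": (0.3, 48.0, 1.0),
    "complier": (0.5, 50.0, 4.0),
}


def take_up(kind, z):
    """Treatment received D_i given behavioral type and offer Z_i (no defiers)."""
    if kind == "always_taker":
        return 1
    if kind == "never_taker":
        return 0
    return z  # compliers follow the offer


def simulate(n, seed):
    """Return rows of (type, z, d, y, individual effect)."""
    rng = random.Random(seed)
    names = list(TYPES)
    shares = [TYPES[k][0] for k in names]
    rows = []
    for _ in range(n):
        kind = rng.choices(names, weights=shares)[0]
        _, mean_y0, mean_effect = TYPES[kind]
        y0 = rng.gauss(mean_y0, 2)
        effect = rng.gauss(mean_effect, 1)
        z = 1 if rng.random() < 0.5 else 0     # randomized offer
        d = take_up(kind, z)
        y = y0 + effect * d                    # Z affects Y only through D
        rows.append((kind, z, d, y, effect))
    return rows


rows = simulate(100_000, seed=4)

# Numerator: intent-to-treat effect of the offer on outcomes.
itt = (fmean(y for _, z, _, y, _ in rows if z == 1)
       - fmean(y for _, z, _, y, _ in rows if z == 0))
# Denominator: first stage, the effect of the offer on take-up.
first_stage = (fmean(d for _, z, d, _, _ in rows if z == 1)
               - fmean(d for _, z, d, _, _ in rows if z == 0))
wald = itt / first_stage

# Benchmarks available only in a simulation.
true_late = fmean(e for kind, _, _, _, e in rows if kind == "complier")
naive = (fmean(y for _, _, d, y, _ in rows if d == 1)
         - fmean(y for _, _, d, y, _ in rows if d == 0))

print(f"first stage = {first_stage:.3f}")
print(f"Wald (ITT / first stage) = {wald:.2f}, true complier effect = {true_late:.2f}")
print(f"naive treated-vs-untreated difference = {naive:.2f}")
```

In this setup the first stage recovers the complier share, and the ratio tracks the average effect among compliers rather than the effects for always-takers or never-takers. Comparing outcomes by treatment received, by contrast, mixes in the different baseline earnings of the behavioral types.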

Conclusion

The Potential Outcomes Framework provides economics with rigorous foundations for causal thinking. By formalizing the distinction between correlation and causation, it transforms vague debates about "what works" into precise questions about specific counterfactual comparisons. This mathematical discipline proves essential for policy evaluation, forcing researchers to acknowledge uncertainty and design identification strategies that yield credible causal estimates rather than mere correlational speculation.

The framework's lasting contribution lies not in solving all problems of causal inference, but in clarifying exactly what problems we face. It reveals why naive comparisons mislead, why randomization works, and why quasi-experimental methods sometimes succeed in recovering causal effects from observational data. These insights prove invaluable for policymakers navigating complex interventions with limited budgets and high stakes.

In an era when politicians routinely claim their preferred policies "work" based on cherry-picked correlations, the Potential Outcomes Framework provides essential intellectual infrastructure for distinguishing genuine effects from wishful thinking. It cannot guarantee perfect knowledge, but it can definitively identify when causal claims lack sufficient evidential support. For a discipline charged with guiding consequential policy decisions, such analytical clarity represents not mere academic refinement but essential democratic machinery.

References

Zaslavsky, A. M. (2021). Exploring potential causal inference through natural experiments. JAMA Health Forum, 2(6), e210289.

Menchetti, F., Cipollini, F., & Mealli, F. (2021). Estimating the causal effect of an intervention in a time series setting: the C-ARIMA approach. arXiv preprint arXiv:2103.06740.

Ludwig, J., Duncan, G. J., Gennetian, L. A., Katz, L. F., Kessler, R. C., Kling, J. R., & Sanbonmatsu, L. (2012). Neighborhood effects on the long-term well-being of low-income adults. Science, 337(6101), 1505-1510.